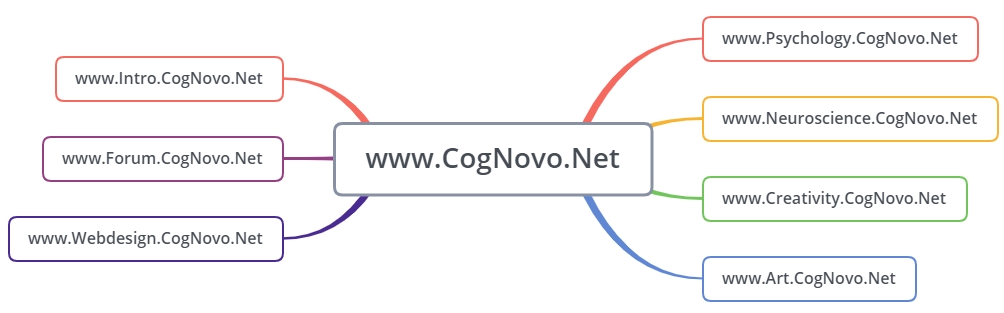

The CogNovo Network logo has a deeper semantic and hermeneutical interpretative meaning,. Its topology symbolises “decentralised cognitive liberty” which is a condicio sine qua non for creativity, unfoldment of psychological potential, and brain development (i.e., neuro/synapto-plasticity,

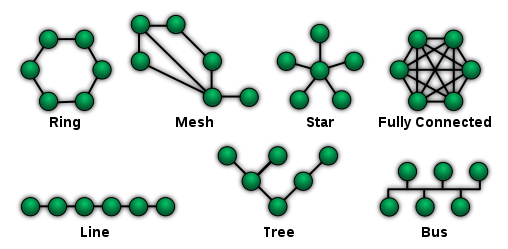

Cf. Sensory summation/binding and the formation of higher-order abstract cognitive concepts., , etc.). The logo consists of a fully connected network which is also known as a non-centralised/non-hirachical full mesh topology in network science. In this specific network topology all nodes are connected to each other (viz., exhaustive interconnectivity). In graph theory this specific arrangement is termed a “complete graph” and the number of connections grows quadratically based on the number of network-nodes. This inclusive relational principle can be mathematically formalised as follows:
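As a minimal sketch of this relationship, assuming the standard complete-graph edge count n(n - 1)/2, the following JavaScript enumerates every connection by brute force and compares the result with the closed form. The function names `completeGraphEdges` and `completeGraphEdgeCount` are hypothetical and chosen only for illustration.

```javascript
// Hypothetical helper: list every undirected connection of a complete graph
// on n nodes (node ids 0 .. n - 1). Each pair [i, j] with i < j appears once.
function completeGraphEdges(n) {
  const edges = [];
  for (let i = 0; i < n; i++) {
    for (let j = i + 1; j < n; j++) {
      edges.push([i, j]);
    }
  }
  return edges;
}

// Closed-form count of connections: n(n - 1) / 2.
function completeGraphEdgeCount(n) {
  return (n * (n - 1)) / 2;
}

// Doubling the number of nodes roughly quadruples the number of connections.
for (const n of [2, 4, 8, 16]) {
  console.log(n, completeGraphEdges(n).length, completeGraphEdgeCount(n));
}
// prints: 2 1 1, then 4 6 6, then 8 28 28, then 16 120 120
```

The enumeration and the formula agree for every n, and the jump from 28 connections at 8 nodes to 120 at 16 nodes shows the quadratic growth.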

This document provides a comprehensive primer on various network topologies and contains numerous code snippets for their implementation in R (open-source statistical software).

See also: Lewin, K. (1936). Principles of topological psychology. New York: McGraw-Hill.

Full-text: archive.org/details/PrinciplesOfTopologicalPsychology/

A fully connected network thus possesses a non-hierarchical structure without any “centralised authority”, and it is highly reliable/robust owing to its large number of redundant pathways. Besides its generic biological pertinence, the idea of equal distribution without any centralisation has obvious far-reaching political and philosophical implications: it is a truly liberal and democratic topology which allows for an “open dialogue” independent of any “top-down regulation”.
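The robustness claim can be illustrated with a small sketch that removes nodes from a full mesh and from a centralised star topology, then checks whether the surviving nodes can still reach each other. The star graph is an assumption introduced here purely as a contrast case, and all function names are hypothetical.

```javascript
// Hypothetical helpers for illustration; node ids are 0 .. n - 1.

// Full mesh (complete graph): every pair of nodes is linked.
function fullMeshEdges(n) {
  const edges = [];
  for (let i = 0; i < n; i++) {
    for (let j = i + 1; j < n; j++) edges.push([i, j]);
  }
  return edges;
}

// Star graph (centralised contrast case): node 0 is the hub,
// every other node links only to it.
function starEdges(n) {
  const edges = [];
  for (let i = 1; i < n; i++) edges.push([0, i]);
  return edges;
}

// Returns true if all surviving nodes can still reach each other
// after the nodes listed in `removed` have failed.
function staysConnected(n, edges, removed) {
  const failed = new Set(removed);
  const alive = [];
  for (let v = 0; v < n; v++) {
    if (!failed.has(v)) alive.push(v);
  }
  if (alive.length <= 1) return true;

  // Adjacency list restricted to surviving nodes.
  const neighbours = new Map(alive.map((v) => [v, []]));
  for (const [a, b] of edges) {
    if (failed.has(a) || failed.has(b)) continue;
    neighbours.get(a).push(b);
    neighbours.get(b).push(a);
  }

  // Breadth-first search starting from the first surviving node.
  const visited = new Set([alive[0]]);
  const queue = [alive[0]];
  while (queue.length > 0) {
    const current = queue.shift();
    for (const next of neighbours.get(current)) {
      if (!visited.has(next)) {
        visited.add(next);
        queue.push(next);
      }
    }
  }
  return visited.size === alive.length;
}

console.log(staysConnected(6, fullMeshEdges(6), [0]));       // true
console.log(staysConnected(6, fullMeshEdges(6), [0, 1, 2])); // true
console.log(staysConnected(6, starEdges(6), [0]));           // false
```

In the full mesh no single node is critical, so any subset of nodes can fail without isolating the rest; in the star, losing the hub disconnects everything.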

The idea of interconnectivity is also pertinent for the conceptualisation of interdisciplinary research, cf. holism.ga

Furthermore, it is an important idea in the context of creativity research. In neuroscience the concept of “spreading neuronal activation” is crucial for information processing in the brain (e.g., associative processes related to semantics, concept formation, and cognitive schemata). In a fully connected mesh topology information can spread freely (without inhibition/depression) and therefore ‘co-activate’ other nodes in the network. This ‘free flow’ of information (ideas/memes) is crucial for creativity and cognitive innovation – specifically in social systems (cf. cybernetics & quasi-evolutionary algorithms).
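The contrast between unrestricted and restricted spreading can be sketched with a toy model. This is an illustrative assumption, not a neuroscientific model: in each step every node keeps a fraction of its activation and shares the rest equally among its neighbours. The function names, the `spread` parameter, and the chain topology used for comparison are all hypothetical.

```javascript
// Toy spreading-activation model (an assumption for illustration only).

// Neighbour lists for a fully connected mesh of n nodes.
function fullyConnectedNeighbours(n) {
  return Array.from({ length: n }, (_, i) =>
    Array.from({ length: n }, (_, j) => j).filter((j) => j !== i)
  );
}

// Neighbour lists for a chain 0 - 1 - 2 - ... - (n - 1), used as a contrast.
function chainNeighbours(n) {
  return Array.from({ length: n }, (_, i) =>
    [i - 1, i + 1].filter((j) => j >= 0 && j < n)
  );
}

// One synchronous step: each node keeps (1 - spread) of its activation
// and distributes the remainder equally among its neighbours.
function spreadStep(activation, neighbours, spread) {
  const next = activation.map((a) => a * (1 - spread));
  neighbours.forEach((linked, node) => {
    if (linked.length === 0) {
      next[node] += activation[node] * spread; // isolated node keeps everything
      return;
    }
    const share = (activation[node] * spread) / linked.length;
    for (const target of linked) next[target] += share;
  });
  return next;
}

// Number of nodes carrying any activation at all.
function countActive(activation) {
  return activation.filter((a) => a > 0).length;
}

const start = [1, 0, 0, 0, 0]; // only node 0 is active initially
const meshAfterOneStep = spreadStep(start, fullyConnectedNeighbours(5), 0.5);
const chainAfterOneStep = spreadStep(start, chainNeighbours(5), 0.5);

console.log(meshAfterOneStep, countActive(meshAfterOneStep));   // [0.5, 0.125, 0.125, 0.125, 0.125] 5
console.log(chainAfterOneStep, countActive(chainAfterOneStep)); // [0.5, 0.5, 0, 0, 0] 2
```

After a single step the full mesh has co-activated every node, whereas the chain has reached only the immediate neighbour.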

Join the forum to discuss – freely: forum.cognovo.net

Also visit a related project of mine: cognitive-liberty.online

, 8(12), 976–987.

Plain numerical DOI: 10.1038/nrn2277


Use the mouse to interact with the virtual objects.

Source Code

```javascript
import * as THREE from 'three';

import { OrbitControls } from 'three/addons/controls/OrbitControls.js';
import { RGBELoader } from 'three/addons/loaders/RGBELoader.js';

import { GUI } from 'three/addons/libs/lil-gui.module.min.js';
import Stats from 'three/addons/libs/stats.module.js';

let camera, scene, renderer, stats;
let cube, sphere, torus, material;

let cubeCamera, cubeRenderTarget;

let controls;

init();

function init() {

  renderer = new THREE.WebGLRenderer( { antialias: true } );
  renderer.setPixelRatio( window.devicePixelRatio );
  renderer.setSize( window.innerWidth, window.innerHeight );
  renderer.setAnimationLoop( animation );
  renderer.toneMapping = THREE.ACESFilmicToneMapping;
  document.body.appendChild( renderer.domElement );

  window.addEventListener( 'resize', onWindowResized );

  stats = new Stats();
  document.body.appendChild( stats.dom );

  camera = new THREE.PerspectiveCamera( 60, window.innerWidth / window.innerHeight, 1, 1000 );
  camera.position.z = 75;

  scene = new THREE.Scene();
  scene.rotation.y = 0.5; // avoid flying objects occluding the sun

  new RGBELoader()
    .setPath( 'textures/equirectangular/' )
    .load( 'quarry_01_1k.hdr', function ( texture ) {

      texture.mapping = THREE.EquirectangularReflectionMapping;

      scene.background = texture;
      scene.environment = texture;

    } );

  //

  cubeRenderTarget = new THREE.WebGLCubeRenderTarget( 256 );
  cubeRenderTarget.texture.type = THREE.HalfFloatType;

  cubeCamera = new THREE.CubeCamera( 1, 1000, cubeRenderTarget );

  //

  material = new THREE.MeshStandardMaterial( {
    envMap: cubeRenderTarget.texture,
    roughness: 0.05,
    metalness: 1
  } );

  const gui = new GUI();
  gui.add( material, 'roughness', 0, 1 );
  gui.add( material, 'metalness', 0, 1 );
  gui.add( renderer, 'toneMappingExposure', 0, 2 ).name( 'exposure' );

  sphere = new THREE.Mesh( new THREE.IcosahedronGeometry( 15, 8 ), material );
  scene.add( sphere );

  const material2 = new THREE.MeshStandardMaterial( {
    roughness: 0.1,
    metalness: 0
  } );

  cube = new THREE.Mesh( new THREE.BoxGeometry( 15, 15, 15 ), material2 );
  scene.add( cube );

  torus = new THREE.Mesh( new THREE.TorusKnotGeometry( 8, 3, 128, 16 ), material2 );
  scene.add( torus );

  //

  controls = new OrbitControls( camera, renderer.domElement );
  controls.autoRotate = true;

}

function onWindowResized() {

  renderer.setSize( window.innerWidth, window.innerHeight );

  camera.aspect = window.innerWidth / window.innerHeight;
  camera.updateProjectionMatrix();

}

function animation( msTime ) {

  const time = msTime / 1000;

  cube.position.x = Math.cos( time ) * 30;
  cube.position.y = Math.sin( time ) * 30;
  cube.position.z = Math.sin( time ) * 30;

  cube.rotation.x += 0.02;
  cube.rotation.y += 0.03;

  torus.position.x = Math.cos( time + 10 ) * 30;
  torus.position.y = Math.sin( time + 10 ) * 30;
  torus.position.z = Math.sin( time + 10 ) * 30;

  torus.rotation.x += 0.02;
  torus.rotation.y += 0.03;

  cubeCamera.update( renderer, scene );

  controls.update();

  renderer.render( scene, camera );

  stats.update();

}
```

CogNovo Web-Forum
